Updated 22.07.23

Why AI gave us all whiplash

I’ve had to do a few presentations at work since I won the internal AI competition, so I’ve been thinking more about how to communicate artificial intelligence to people who may or may not know much about it. I’ve also had this paradoxical feeling that artificial intelligence (AI) has snuck up on us out of nowhere, even though it has been around in one form or another for decades. I really needed to get my thoughts about this down, so let’s explore this together.

AI: Part of the culture

The concept of AI, or thinking machines, is no newcomer to our cultural sphere. The term “robot” was coined back in the 1920s, and the ideas of “artificial intelligence” and “machine learning” entered the scene around 30 years later, shortly after the first computers were invented.

From friendly droids like R2-D2 or WALL-E to the murderous cyborgs in “Terminator”, AI and robots have long been subjects of fascination in pop culture and always thought of as “just around the corner”.

So why does it feel like AI has come out of nowhere over the last few years? I can’t be the only one who feels like this. I have a theory that I’ve been working through in my head to explore this question, and it can be boiled down to one sentence: “AI skipped a step”. To understand this, let’s first discuss Moore’s Law and the Law of Accelerating Returns.

AI skipped a step

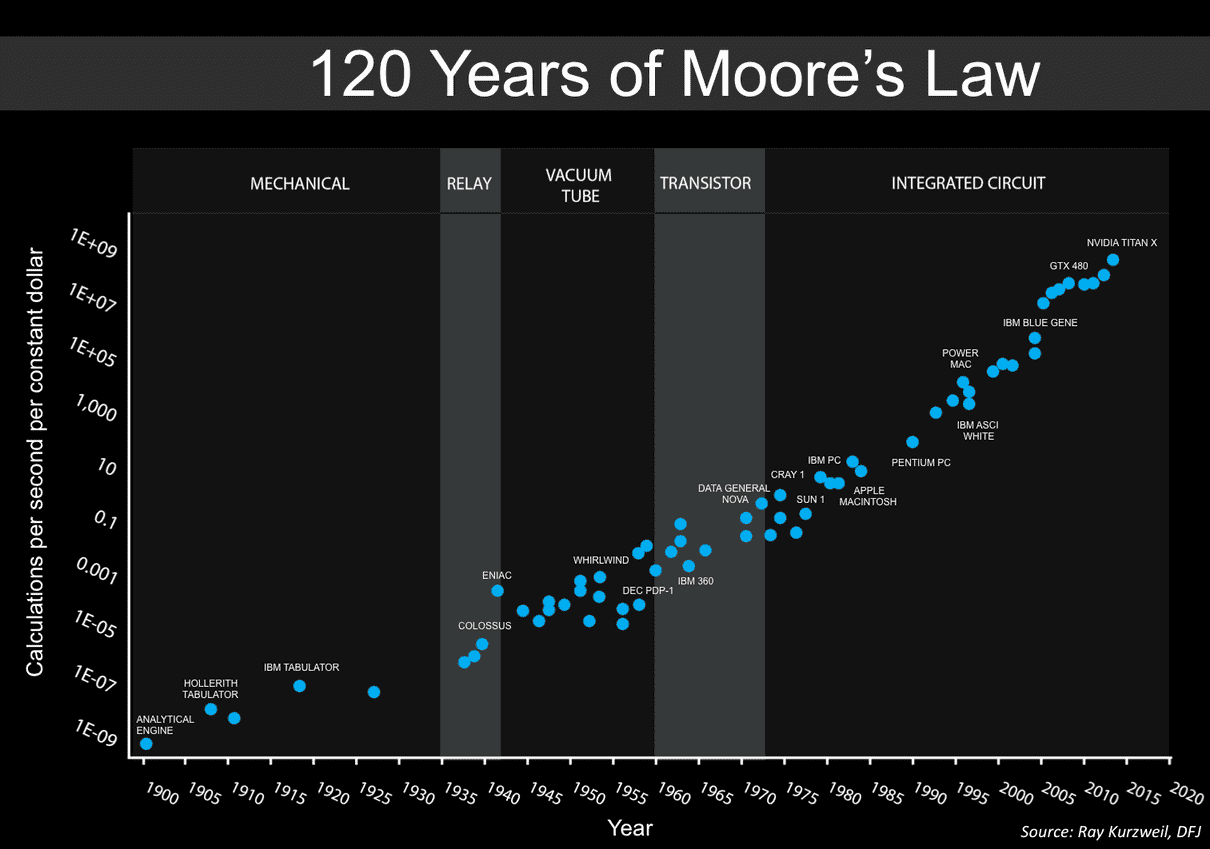

Moore’s Law is the famous prediction made by Gordon Moore, co-founder of Intel, that the number of transistors on an integrated circuit would roughly double every two years, thereby increasing computing power.
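To get a feel for what “doubling every two years” means in practice, here is a minimal sketch of the arithmetic. The function name and the starting figure are my own choices for illustration (roughly 2,300 transistors, about what Intel's first microprocessor had in 1971), and the result is a back-of-the-envelope projection, not a real chip specification.

```python
def projected_transistors(start_count: float, years: float,
                          doubling_period: float = 2.0) -> float:
    """Project a transistor count forward, assuming it doubles
    once every `doubling_period` years (the Moore's Law rule of thumb)."""
    doublings = years / doubling_period
    return start_count * 2 ** doublings


# Illustrative assumption: start from roughly 2,300 transistors
# and let the count double every two years for 50 years.
start = 2_300
for years in (0, 10, 20, 30, 40, 50):
    print(f"after {years:2d} years: {projected_transistors(start, years):,.0f}")
```

Twenty-five doublings turn a few thousand transistors into tens of billions, which is why exponential growth is so hard to picture and so hard to sustain.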

This prediction has held true for over half a century, and is only now starting to show signs of slowing down as we make transistors smaller and smaller. Exponential growth is very hard to keep up when dealing with the physical world, because you can only make things so small. We may be on the cusp of a whole new paradigm shift, with quantum computers starting to make some real headway. Google claims that its latest prototype can complete certain tasks that would take the current #1 supercomputer 47 years.

Then comes Ray Kurzweil’s concept of the “Law of Accelerating Returns”, which he set out in a 2001 essay of the same name and expanded on in his book “The Singularity Is Near”. It is seen as broader and more encompassing than Moore’s Law. It suggests that technological progress as a whole (including but not limited to computing) is exponential rather than linear, and that the pace of innovation increases over time due to iterative improvements. Kurzweil’s law doesn’t just apply to hardware but also includes software development, algorithmic improvements, and overall advancements in AI. It also factors in how these advancements might impact society and the economy. Unlike Moore’s Law, the Law of Accelerating Returns doesn’t predict an eventual slowdown or limit to growth.

His concepts in the book are based around these three key points:

- Technology builds upon itself. As we develop more advanced technology, we use it to create even more advanced technology. This is why the rate of technological progress accelerates over time.

- Information-based technologies improve exponentially. As an example, consider Moore’s Law, which observes that the number of transistors on an integrated circuit (and thus processing power) roughly doubles every two years.

- As technology evolves, “order” increases exponentially. Kurzweil posits that as technology progresses, systems become more organised and efficient, which results in the exponential growth of “order” (meaning the organisation and structure in a system).

Boiling it down to its bare bones, I like to think of Moore’s Law as being based in the physical world, because it’s literally talking about the number of transistors on a chip, whereas Ray’s law explores the digital world and how it will affect our society.

The 4 steps

When talking about the progress of AI, many theorists discuss four stages of tasks and jobs being replaced by AI and robots: Physical Repetitive, Cognitive Repetitive, Physical Non-Repetitive, and Cognitive Non-Repetitive. Let’s explore these stages to better understand where we currently stand.

1# Physical Repetitive

The first stage covers all the physically intensive jobs that involve lots of repetition. They are monotonous and quite boring to us meat bags, so we quickly put down our tools in this area and handed the mantle over to the automaton. If you imagine a car being built today, you probably think of a massive factory line filled with robotic arms, moving and putting all the pieces together. So we have very much passed this stage.

2# Cognitive Repetitive

Next up are the tasks and jobs that require more mind than muscle. Are we past this stage? I think so, because if you load up just about any website these days, you will generally have the option to click a chat icon and talk to a virtual assistant as a first line of customer service. Most are quite primitive at the moment, but it won’t take long before companies are using dialled-in AI systems to handle 99% of these queries. Other examples include data entry tasks, which are being automated at an ever-increasing rate. Just as a car company that builds everything by hand would quickly go out of business, so would one that manually enters reams and reams of data.

Not far away on the horizon are the dreaded AI-powered robo-telemarketers. We’re going to be in for a deluge of them, so look forward to that.

3# Physical Non-Repetitive

This is where it gets interesting, because the next stage of AI and robotic development was expected to be the automation of physical, non-repetitive tasks such as maintenance, firefighting and construction. However, this has not yet materialised, with only a few such robots being developed for the super-rich, like the Boston Dynamics robot dog.

4# Cognitive Non-Repetitive

Jobs and tasks that fit this category are the artistic roles, such as designer, writer and even computer programmer. These are roles that, until only a few years ago, we thought computers would never do. A robot can’t create something new like a beautiful painting, or write exquisite poetry, right? How wrong we were. New generative AIs like ChatGPT and DALL-E can produce more works of art in minutes than a human could in a lifetime.

So everyone assumed this would be the last step that robots would take, meaning that all the artistic and “intellectual” jobs were the safest. If you click on the links in the previous sentence, you’ll notice that they were written in 2017, just a few years before the AI boom that we’re seeing now. We’re going to see far more artist jobs going before we see robo-construction workers.

Conclusion

Just a few years ago, AI in creativity-based fields would have been unthinkable. Yet, today we’re seeing machines creating works of art, literature, and music at an astonishing pace, thereby reshaping our understanding of creativity itself.

This is why I truly believe that it feels like AI came out of nowhere, even to those in the tech world thinking about it. I wrote an article about cool uses of AI only just over a year ago, and it’s already vastly out of date.

The recent AI boom is really starting to shake up the content creation and tech industries, to the point that it has made us question what creativity is. Go back a few years and you’ll see many articles talking about how robots will never replace humans in these arenas. However, now even the average person on the street cannot deny that creativity can no longer be thought of as a uniquely human trait.

There we are. I just thought it was interesting to explore why it feels like AI came out of nowhere, when it’s been around and played a big part in popular culture for so long.